Thoughts on building marketing & growth capabilities

Using Keyword Rankings In SEO

A few weeks ago I gave a talk at an SEO Meetup in San Francisco. It was a great opportunity to get more feedback on a product/tool that I’m working on (and that we are already using at Postmates). You’ll hear more about it in the coming months (hopefully). In a previous blog post […]

20 Reasons Why Most Experiment Programs Are Set Up for Failure

Over the last few years I have worked on more than 200 experiments, ranging from a simple change to a Call To Action (CTA) to complete design overhauls and full feature integrations into products. So far they have taught me a lot about how to set up an experiment program and what you can mess […]

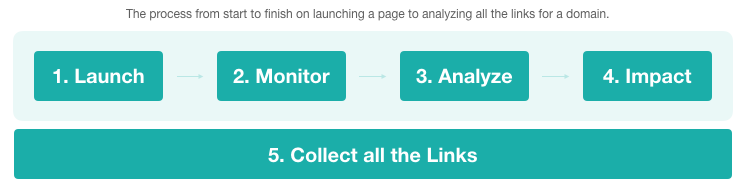

Measuring SEO Progress: From Start to Finish – Part 2: From Creation to Getting Links

How to measure (and, over time, forecast) the impact of the features you’re building for SEO, from start to finish. Earlier in this series I covered how to measure progress from creation to traffic (part 1). This blog post (part 2) will go deeper into another […]

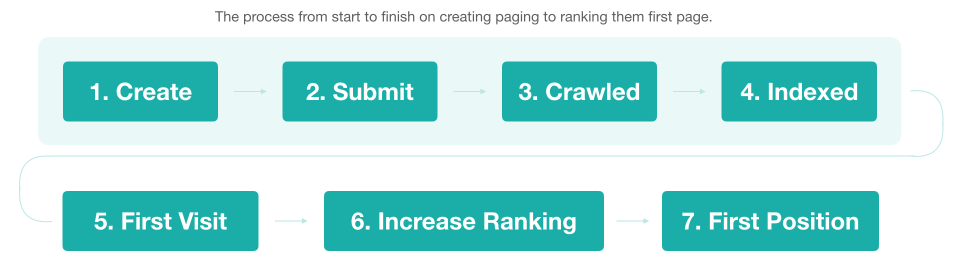

Measuring SEO Progress: From Start to Finish – Part 1: Receiving Traffic

How to measure (and, over time, forecast) the impact of the features you’re building for SEO, from start to finish. This is a topic I’ve been thinking about a lot over the last few months. It’s hard, as most of the actual work that we do can’t be measured easily […]

What tools am I using for SEO?

A while back somebody posted the SEO platforms/vendors/tools that he was using at his agency job (as an SEO). Noticing that some great tools were missing from his list, I decided to respond. It also got me thinking about my own toolset, so I decided to dedicate a blog post to it, to get better recommendations and learn from […]

From 99% ‘duplicate content’ to 15 editors and back to ‘duplicate content’

Judging by the questions from new SEOs and people in online marketing, duplicate content is still seen as one of the biggest issues in Search Engine Optimization. I’ve got news for you: it certainly isn’t, as there are plenty of other issues. But somehow it still always comes up when talking about SEO. As […]

Retrieving Search Analytics Data from the Google Search Console API for Bulk Keyword Research

Last year I blogged about using 855 properties to retrieve all your Search Analytics data. Just after that, Google fortunately announced that the API limit of returning only the top 5,000 results had been lifted. Since then it’s been possible to pull potentially all of your keywords from Google Search Console via their API […]

Making a move … what’s next!?

Last week was my last one at The Next Web as their Director of Marketing. For the last four years I’ve worked alongside great people: publishing the best content (TNW), organising the best and biggest tech conferences (TNW Conferences), selling the craziest drones (TNW Deals), creating the most beautiful workspace (TQ) and those who collect […]

Why giving back is so important: help out

Getting more experience can be hard when you’re just starting your career. You’re either hoping to land an internship or your first job, or, if you’re making a career move, you just need the experience to keep up. But you’re living in a great time to get there, as there are many […]

Introducing: the Google Tag Manager & Google Analytics for AMP – WordPress Plugin

Today it’s time to make it easier for sites running AMP on WordPress to track and measure their website traffic. Over the past few weeks I’ve been working on a WordPress Plugin that supports adding Google Tag Manager and Google Analytics to your AMP pages. As AMP itself is quite a hard new platform to […]